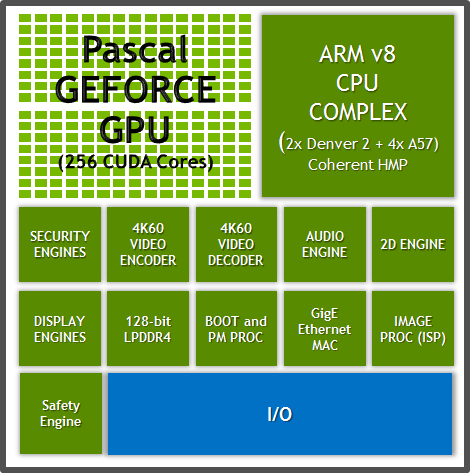

FP16 Throughput on GP104: Good for Compatibility (and Not Much Else)

Speaking of architectural details, I know that the question of FP16 (half precision) compute performance has been of significant interest. FP16 performance has been a focus area for NVIDIA for both their server-side and client-side deep learning efforts, leading to the company turning FP16 performance into a feature in and of itself.

Starting with the Tegra X1, and then carried forward for Pascal, NVIDIA added native FP16 compute support to their architectures. Prior to these parts, any use of FP16 data would require that it be promoted to FP32 for both computational and storage purposes, which meant that using FP16 did not offer any meaningful improvement in performance or storage needs. In practice this meant that if a developer only needed the precision offered by FP16 compute (and deep learning is quickly becoming the textbook example here), then at an architectural level power was being wasted computing that extra precision.

Pascal, in turn, brings with it native support for FP16 for both storage and compute. On the storage side, Pascal supports FP16 datatypes, which relative to the previous use of FP32 means that FP16 values take up less space at every level of the memory hierarchy (registers, cache, and DRAM). On the compute side, Pascal introduces a new type of FP32 CUDA core that supports a form of FP16 execution where two FP16 operations are run through the CUDA core at once (vec2).
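To make the storage and vec2 ideas concrete, here is a minimal Python sketch that uses only the standard `struct` module. It is an illustration of the data layout, not a model of the hardware: the helper names (`pack_half2`, `unpack_half2`, `half2_add`) are hypothetical, and the choice to put the first value in the low 16 bits is an assumption made for the example. In CUDA, the analogous idea is exposed through the `__half2` type and intrinsics such as `__hadd2`.

```python
import struct

# Size of one value in each format, in bytes.
FP32_BYTES = struct.calcsize("<f")  # 4
FP16_BYTES = struct.calcsize("<e")  # 2, so two FP16 values fit in one FP32 slot


def pack_half2(lo, hi):
    """Pack two FP16 values into one 32-bit word (hypothetical helper).

    Assumption for this sketch: `lo` occupies the low 16 bits.
    """
    lo_bits, hi_bits = struct.unpack("<HH", struct.pack("<ee", lo, hi))
    return (hi_bits << 16) | lo_bits


def unpack_half2(word):
    """Split a 32-bit word back into its two FP16 values."""
    lo_bits = word & 0xFFFF
    hi_bits = (word >> 16) & 0xFFFF
    return struct.unpack("<ee", struct.pack("<HH", lo_bits, hi_bits))


def half2_add(a, b):
    """Add two packed pairs lane by lane, rounding each result to FP16.

    This mimics the vec2 idea (one operation, two FP16 results). Unlike
    real hardware, struct raises OverflowError instead of producing inf.
    """
    a_lo, a_hi = unpack_half2(a)
    b_lo, b_hi = unpack_half2(b)
    return pack_half2(a_lo + b_lo, a_hi + b_hi)


x = pack_half2(1.0, 2.0)
y = pack_half2(0.5, 0.25)
print(hex(x))                         # 0x40003c00
print(unpack_half2(half2_add(x, y)))  # (1.5, 2.25)
```

The point of the sketch is the ratio: a register, cache line, or DRAM burst that holds N FP32 values holds 2N FP16 values, and a vec2 operation produces two FP16 results from one pair of 32-bit operands.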
